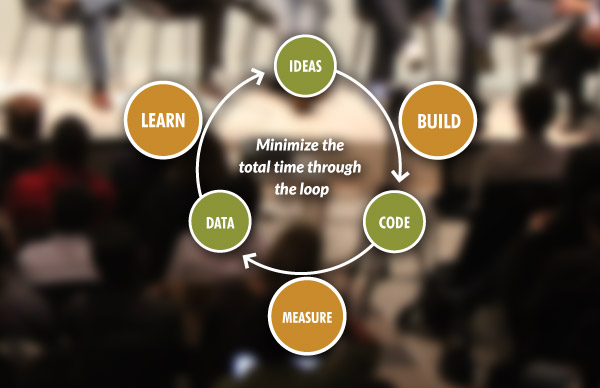

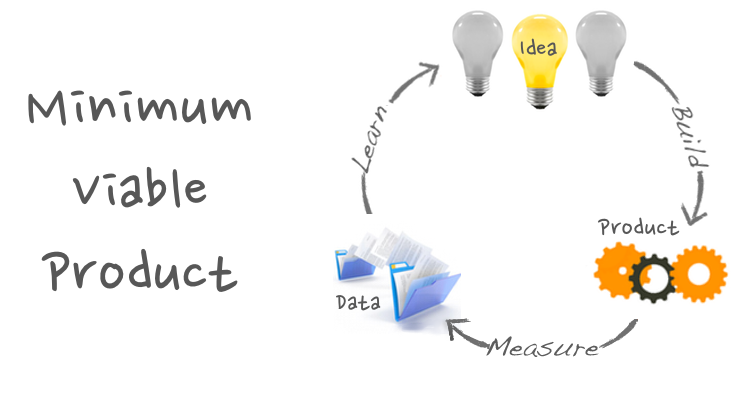

The validated learning (VL) process is one of the pillars of the lean startup movement, initiated by Eric Ries.

This process, based on the customer validation concept developed by Steve Blank, is derived from the lean manufacturing philosophy. Its framework rests on the assumption that we first need to validate our basic ideas with a minimum amount of effort before falling into the trap of developing a full-blown service that no customer actually wants.

The validated learning process consists of a few essential steps. The first is defining a hypothesis and the actionable metrics against which it will be validated. The next is developing a minimum viable solution that will either support or reject the hypothesis during the experiment phase. The experiment phase lasts for a defined period of time and ends with conclusions, based on which the solution can be developed further, partly abandoned or even discarded completely.

In this article, I want to show you how you can benefit from the validated learning process and give you an example of how we use it in CayenneApps to make your product even better.

Why do I need validated learning?

Validated learning as defined by Ries has a few powerful advantages over a traditional product release cycle:

- The development process can be shortened substantially. Using validated learning, you can avoid developing unwanted features of your product.

- Because of the shorter development process, the cost of building a sufficient version of a product can be significantly lower.

- The process puts the focus on meaningful and actionable metrics, which can eventually lead to creating a better, more customer-oriented product.

- Validated learning facilitates agility. Because its foundation is the building of minimum viable solutions, your company can develop a better level of flexibility to adapt to the ever-changing environment.

How do we engage in the validated learning process?

Validated learning is all about making small steps and quickly verifying whether the chosen direction is correct. When we begin building a new product, we have a head full of assumptions that we want to test. However, in the later stages of product development, we sometimes forget to keep our experiments going. This is where validated learning can help by reminding us of this essential need. Ultimately, product development is a never-ending stream of experiments.

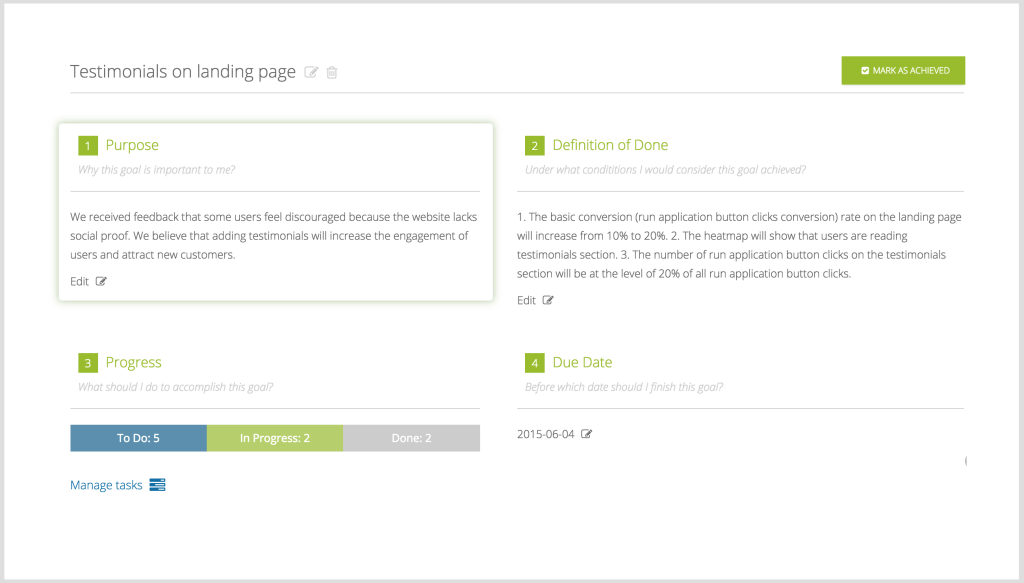

Each of our experiments starts with a hypothesis. In CayenneApps, we are used to writing down these hypotheses as goals. This helps us not only to define them more carefully but also to manage a series of experiments more easily.

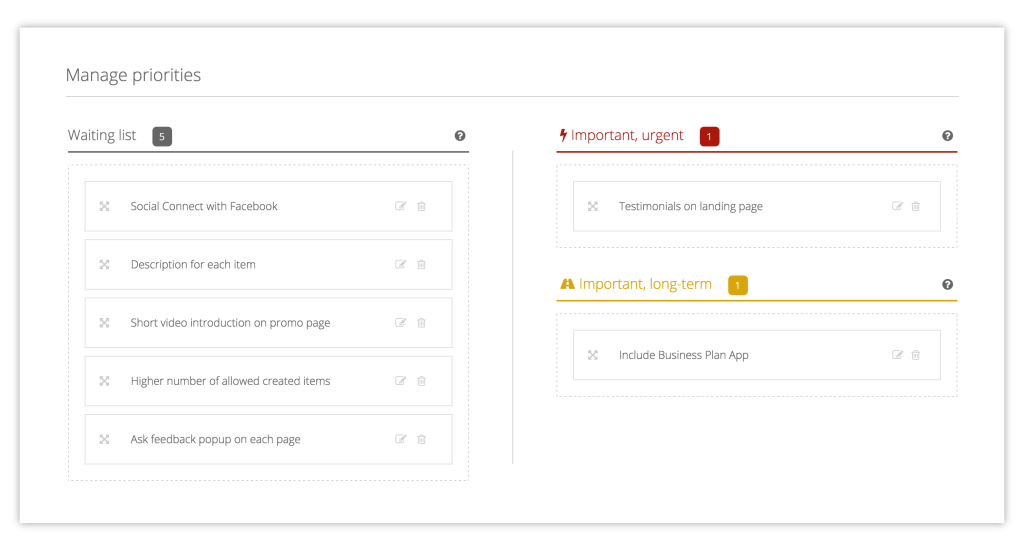

When I say manage, I mean prioritize. Thankfully or not, not all of your hypotheses are equally essential to product development. Some of them are “to-be-or-not-to-be” kinds of problems, while others just make our product slightly better. The ugly truth is that we need both.

So, we use the pattern that we have developed in our Goals module and categorize experiments as urgent or long-term. Hypotheses whose results have an immediate and big impact on our product are labeled as urgent. Others, which are particularly important in the long run, are marked as long-term. The rest end up on the “waiting list”.
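The categorization rule described above can be sketched in a few lines of Python. This is only an illustration: the `Hypothesis` class, its two boolean fields and the `categorize` function are hypothetical names chosen for this example, not part of the CayenneApps Goals module.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    # Hypothetical structure: the two flags are assumptions made for this sketch.
    statement: str
    immediate_big_impact: bool = False
    important_long_run: bool = False


def categorize(hypothesis: Hypothesis) -> str:
    """Return the category that the rule in the text would assign."""
    if hypothesis.immediate_big_impact:
        return "urgent"
    if hypothesis.important_long_run:
        return "long-term"
    # Everything else waits its turn.
    return "waiting list"


backlog = [
    Hypothesis("Users will pay for the product", immediate_big_impact=True),
    Hypothesis("A new market exists for one extra feature", important_long_run=True),
    Hypothesis("A slightly nicer icon set improves the product"),
]

for item in backlog:
    print(f"{categorize(item):>12}: {item.statement}")
```

Checking the urgent flag first means that a hypothesis which is both urgent and important in the long run is treated as urgent. That ordering is a design choice of this sketch, not something the process prescribes.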

What we need in experiments are numbers. Therefore, hypotheses are usually combined with actionable metrics that describe the exact checks we will use to test whether our thesis is correct. It is true that there are no good experiments without good metrics, but I want to push this idea a bit further…

Defining metrics is not enough

Behind each hypothesis, there is some kind of reasoning. If there weren’t, we might be in serious trouble. The point of running experiments is not the process of conducting them in and of itself. As honest entrepreneurs, we must admit that we are not students in a laboratory class.

We may want to enhance our product with one extra feature, because we want to see whether a particular market exists for it. We want to know this either because we want to make money or to make the world a better place. This root cause is crucial. Knowing why we are conducting the experiment is essential.

In CayenneApps, we write down the reasoning behind all of our experiments. Only later do we fill in all the other details. Because of our experience with Scrum projects, we use the term “definition of done” to describe the metrics that we will use to determine whether the hypothesis is correct. We put it in simple yet concrete sentences like this: “The retention of users from the USA will grow from 40% to 50%.”

This is how we know what to measure and what result to expect.
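To make the idea concrete, here is a minimal sketch of how such a “definition of done” could be written down as data and checked against a measured value. The `DefinitionOfDone` class and its methods are hypothetical and exist only for this illustration; the observed values are made up, and only the 40% and 50% figures come from the example sentence above.

```python
from dataclasses import dataclass


@dataclass
class DefinitionOfDone:
    # Hypothetical structure, assuming a metric that is expected to grow.
    metric: str
    baseline: float  # value before the experiment, in percent
    target: float    # value we expect to reach, in percent

    def is_met(self, observed: float) -> bool:
        """True when the observed value reaches or exceeds the target."""
        return observed >= self.target

    def progress(self, observed: float) -> float:
        """Share of the baseline-to-target gap that has been covered."""
        return (observed - self.baseline) / (self.target - self.baseline)


dod = DefinitionOfDone("retention of users from the USA", baseline=40.0, target=50.0)

# 45.0 is an invented measurement used only to show the calls.
print(dod.is_met(45.0))
print(dod.progress(45.0))
```

Keeping the baseline next to the target is what lets us say not only whether the hypothesis held, but also how far we got, which is useful input for the conclusions later on.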

The other thing to note is that poor experiments tend to take too much time without producing any real conclusions. This does not mean that we should put a strict deadline on each experiment and shut it down arbitrarily once that date has passed. But we should at least define some timeframe for it, whether that is 3 weeks, 3 months or, I hope not, 3 years.

In CayenneApps Goals, we have a special field for each goal: “the due date”. This field defines when we should check how the experiment is going. For us it is not really a due date; it is merely a checkpoint and should be treated that way. By the way, because a single date is too restrictive, we want to get rid of that field in future releases of our product and replace it with a list of time-related milestones.
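As a rough sketch of what treating dates as checkpoints rather than deadlines could look like, the snippet below picks the next checkpoint from a list of milestones. The `next_checkpoint` function and all the dates are hypothetical, and this is not how the Goals module is implemented.

```python
from datetime import date
from typing import List, Optional


def next_checkpoint(milestones: List[date], today: date) -> Optional[date]:
    """Return the earliest milestone that has not passed yet, or None."""
    upcoming = sorted(d for d in milestones if d >= today)
    return upcoming[0] if upcoming else None


# Invented milestones for a single experiment.
milestones = [date(2024, 3, 1), date(2024, 1, 15), date(2024, 2, 15)]

print(next_checkpoint(milestones, today=date(2024, 2, 1)))
```

When every milestone has passed, the function returns `None` instead of closing anything: the experiment is not shut down automatically, it simply has no further scheduled check, which is a signal to review it by hand.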

Up and running

Even though planning can be fun, experiments are all about getting our hands dirty. Just like a regular scientific experiment, a product experiment meant to prove a hypothesis right or wrong requires a lot of work. In Cayenne, when we add a new feature, we use the 5Ds framework:

- Define it,

- Design it,

- Develop it,

- Deliver it,

- …and, I know it sounds out of place, Data-verify it.

Behind each of these steps lies a list of activities that need to be done. In Cayenne, because we like to have them all in one place, we write down each activity and add it to the particular goal as a task. Then, when we open our Kanban board, we can see all of our current tasks at a glance. This helps us keep our finger on the pulse and monitor progress.

When the new feature arrives, we can start gathering data and, after, let’s say, six weeks, we can finally draw some conclusions. Simply put, we can now test our hypothesis.

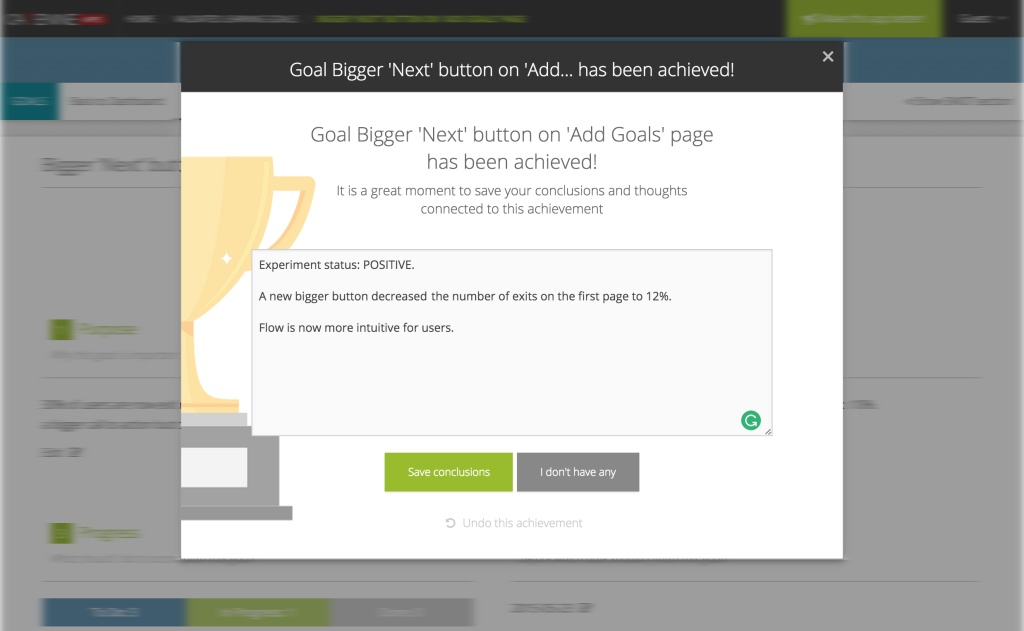

The truth is that it doesn’t matter whether the hypothesis is 100% correct or not. It is not a mathematical theory. The biggest error is to check the data, mark the hypothesis as true or false and call it a day. Why? Validated learning is all about, surprise, surprise, …learning! That is why, in Cayenne, after finishing an experiment, we focus on gathering everything that we have learned during its execution. Filling in conclusions gives us a couple of minutes to summarize what we learned about our product, our technology, and of course, our customers.

But we are still not done. Very often, we use these conclusions as a starting point for defining a new experiment, one which can be defined exactly like the previous one and prioritized the same way. As in lean manufacturing, the process goes full circle. As long as there are users, the process of product development goes on.

Final thoughts

To sum up:

In CayenneApps, we try to use validated learning on a regular basis, and I am sure that you are now ready to try it yourself. If you would like to see an example of a few defined experiments, I have added a sample set of goals to our CayenneApps Examples Library, which you can find here. Check it out! And most of all, happy experimenting!

Great post - I like the 5D framework and structured way of running experiments - DOD and purpose are important and help to create meaningful and not artificial experiments. What I learned is that we learn even more during creating the experiment as we need to focus on the purpose and how to measure it. This will already help in the beginning.

I fully agree with the time frame setting.

Thanks